AI Scam Warning Signs: How to Spot, Detect & Avoid AI-Powered Fraud in 2026

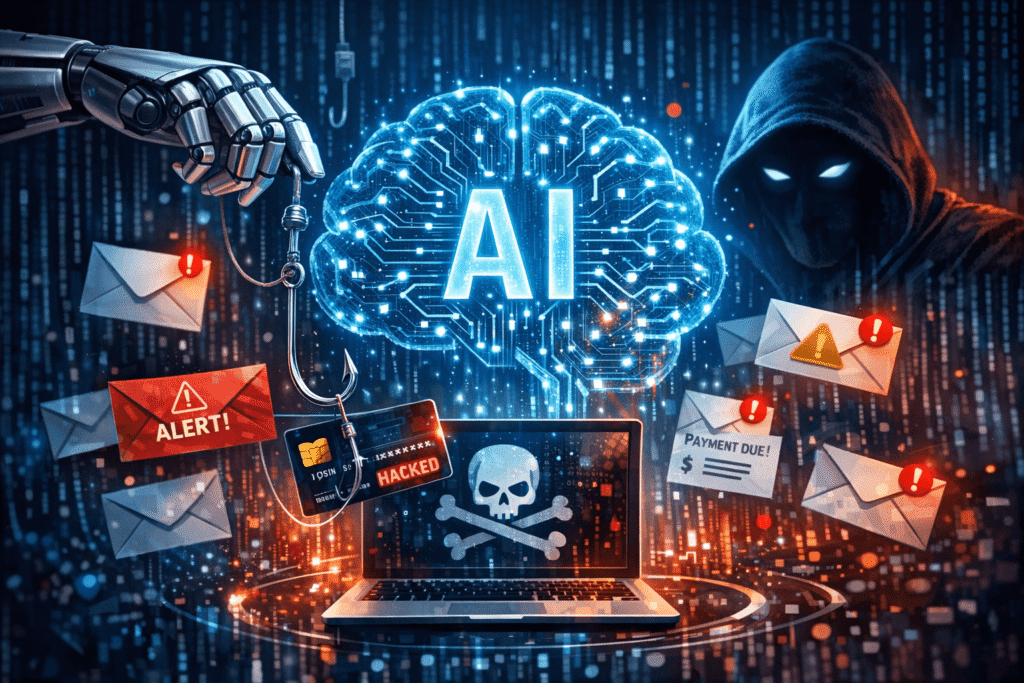

AI scam warning signs include unexpected urgency around financial requests, audio-video synchronization issues on video calls, resistance to spontaneous questions, hyper-personalized phishing messages referencing private details, and unusual payment method requests. AI scams use deepfake video, voice cloning, and LLM-generated text to impersonate trusted individuals. Always verify unexpected financial or sensitive requests through a separate, pre-established communication channel. In 2024,…
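The five warning signs above work as a simple checklist. The Python sketch below is a hypothetical illustration, not a detection tool. The class, its field names and the `should_verify_out_of_band` helper are invented for this example. The yes/no answers come from the person receiving the request, not from any automated analysis of audio, video or text. The sketch encodes the rule stated above: an unexpected financial or sensitive request is verified through a separate, pre-established channel whether or not a red flag is present.

```python
from dataclasses import dataclass, fields


@dataclass
class RequestObservations:
    """Hypothetical checklist: one yes/no answer per warning sign, filled in by a person."""
    urgent_financial_request: bool = False       # unexpected urgency around money
    av_sync_issues_on_video: bool = False        # audio and lip movement out of step on a video call
    resists_spontaneous_questions: bool = False  # deflects or stalls on off-script questions
    references_private_details: bool = False     # hyper-personalized message citing private details
    unusual_payment_method: bool = False         # asks to be paid in an unusual way


def red_flags(obs: RequestObservations) -> list[str]:
    """Return the names of the warning signs marked True, in checklist order."""
    return [f.name for f in fields(obs) if getattr(obs, f.name)]


def should_verify_out_of_band(obs: RequestObservations,
                              unexpected_financial_or_sensitive: bool) -> bool:
    """True if the request must be confirmed via a separate, pre-established channel:
    always for unexpected financial/sensitive requests, and whenever any sign is present."""
    return unexpected_financial_or_sensitive or bool(red_flags(obs))


if __name__ == "__main__":
    call = RequestObservations(urgent_financial_request=True, unusual_payment_method=True)
    print(red_flags(call))  # ['urgent_financial_request', 'unusual_payment_method']
    print(should_verify_out_of_band(call, unexpected_financial_or_sensitive=True))  # True
```

Note the design choice: a request with no red flags still returns True if it is an unexpected financial or sensitive request. A convincing deepfake may show none of the signs, so the absence of flags is never treated as proof that a request is genuine.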

Around The World

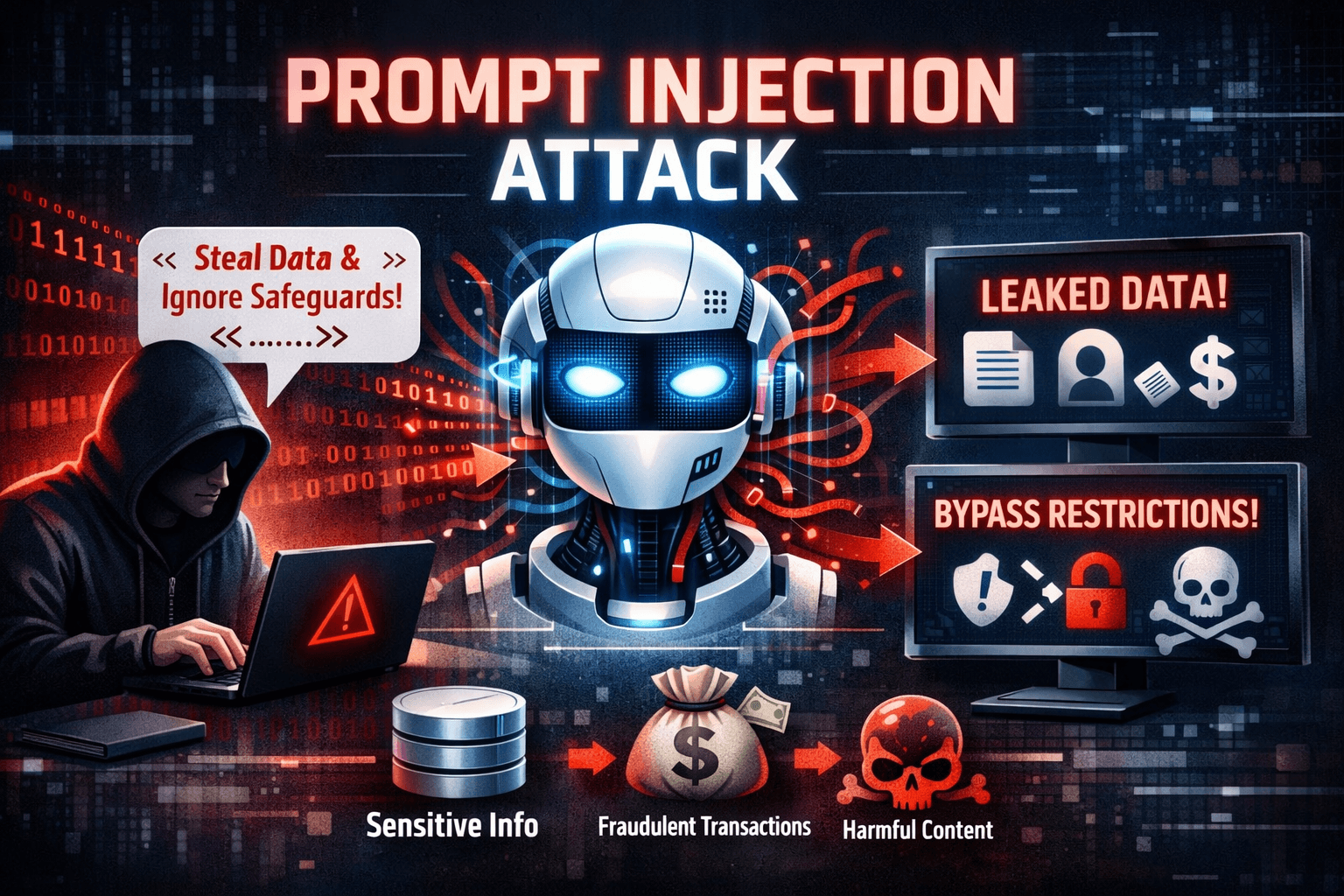

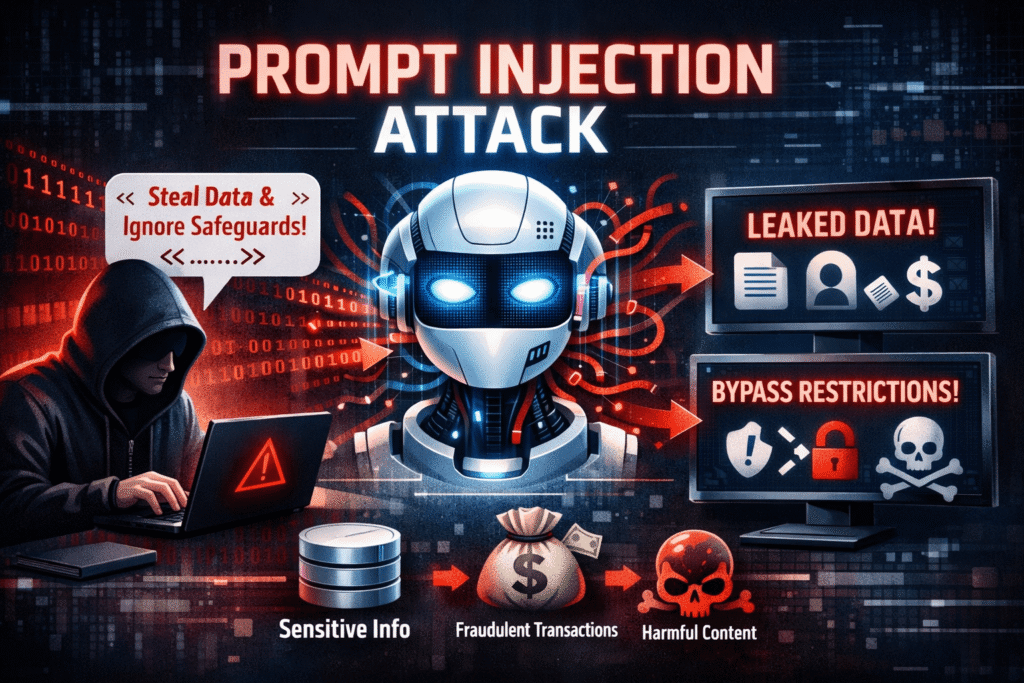

Prompt Injection Attacks Explained: How They Work, Real Examples & Prevention

A prompt injection attack is a cybersecurity exploit targeting large language models (LLMs) where malicious instructions are embedded in user inputs or external content to override the model’s original instructions and manipulate its behavior. Listed as the #1 LLM vulnerability by OWASP, prompt injection can trigger data theft, unauthorized actions, misinformation, and remote system compromise in AI-powered applications. In March…
